Smart speakers are everywhere. But could we design a smart speaker to talk to us with our own voice, or that of a person we know?

(PS: the images for this blog post were generated with the prompt “a voice clone, concept art, matte painting, HQ, 4k”. Is that why there are mountain peaks that resemble a time series of an audio recording??)

Real Time Voice Cloning Library

Let’s start with one of the most popular repositories on GitHub – https://github.com/CorentinJ/Real-Time-Voice-Cloning. It’s a couple of years old now (decades in neural network research) but will provide a good baseline.

Clone the repository: git clone https://github.com/CorentinJ/Real-Time-Voice-Cloning

As it’s audio, let’s use a conda environment, as we’ll need to install ffmpeg, codecs, and audio drivers. Let’s set it up with Python and PyTorch. (I have an Ubuntu/Linux environment, CUDA 12, and an Nvidia GeForce RTX 3080.)

conda create --name voice-clone python

conda activate voice-clone

conda install pytorch torchvision torchaudio pytorch-cuda=11.7 -c pytorch -c nvidia

conda install ffmpeg

pip install -r requirements.txt

python demo_cli.py

Some obligatory tweaks to the setup:

- Comment out numpy in the requirements and use the already supplied numpy. Rerun pip install -r requirements.txt.
- Download the models manually from the link suggested when running the demo_cli.py file. Scan these for viruses using ClamAV, just to check.
- Install portaudio via conda-forge: conda install -c conda-forge portaudio.
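The first tweak can also be scripted. This is a minimal sketch, assuming requirements.txt pins numpy on its own line (something like numpy==<version>; the exact pin in the repository may differ). The helper name comment_out_package is hypothetical. It comments the line out rather than deleting it, so the original pin stays visible:

```python
import re


def comment_out_package(requirements_text: str, package: str = "numpy") -> str:
    """Return requirements text with every line for `package` commented out.

    Matches lines such as 'numpy', 'numpy==1.20.3' or 'numpy >= 1.19'
    (case-insensitive), but not packages that merely share the prefix,
    such as 'numpy-stl'. Already-commented lines are left alone.
    """
    pattern = re.compile(
        rf"^\s*{re.escape(package)}\s*(?:[<>=!~;\[].*)?$", re.IGNORECASE
    )
    out_lines = []
    for line in requirements_text.splitlines():
        if pattern.match(line):
            out_lines.append("# " + line)  # keep the old pin for reference
        else:
            out_lines.append(line)
    return "\n".join(out_lines) + "\n"
```

To apply it: Path("requirements.txt").write_text(comment_out_package(Path("requirements.txt").read_text())) (with from pathlib import Path), then rerun pip install -r requirements.txt.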

I had issues getting access to the audio device within the GUI (graphical user interface) version. (I was taken back in a Proustian wave of memory – why is it so difficult to record sound with Python?!) But the command line demo worked.

Here is an example of my voice as recorded (very dull, just me waffling on):

And here are some examples of my “cloned” voice:

Not quite there, but not horrendous. There are hints of my voice in it.

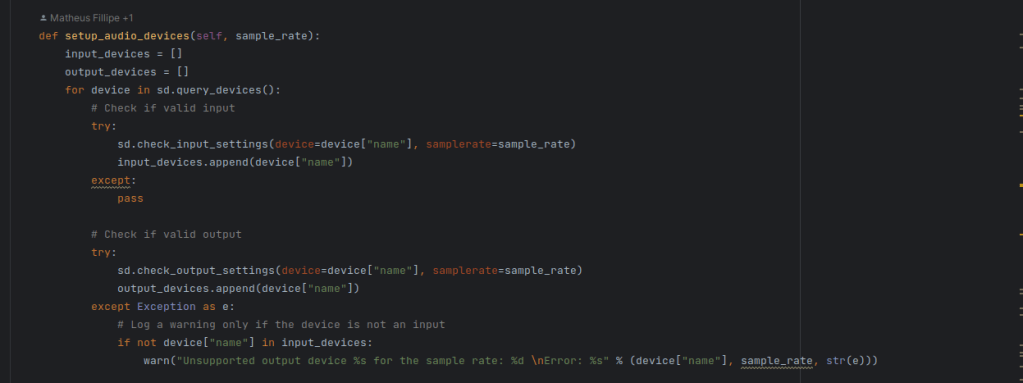

The issue with the GUI was that the synthesizer had a hardcoded sample rate of 16000 (16kHz), but my audio input and output devices all had a default sample rate of 44100 (44.1kHz). There’s probably a way to force the devices to operate at 16kHz, but life is too short. The relevant code is the setup_audio_devices method in toolbox.ui.
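Instead of reconfiguring the devices, another workaround is to resample a 44.1kHz recording to 16kHz before handing it to the synthesizer. This is a minimal sketch, assuming a 16-bit PCM WAV file. The helpers read_wav and resample_linear are hypothetical. Linear interpolation is a crude stand-in for a proper resampler because it applies no anti-aliasing filter. Since 16000/44100 reduces to 160/441, scipy.signal.resample_poly(x, 160, 441) would be the better-quality choice if SciPy is available:

```python
import wave

import numpy as np


def read_wav(path: str) -> tuple[np.ndarray, int]:
    """Read a 16-bit PCM WAV file; return (mono float32 samples in [-1, 1), rate)."""
    with wave.open(path, "rb") as wf:
        if wf.getsampwidth() != 2:
            raise ValueError("this sketch only handles 16-bit PCM")
        rate = wf.getframerate()
        channels = wf.getnchannels()
        frames = wf.readframes(wf.getnframes())
    samples = np.frombuffer(frames, dtype="<i2").astype(np.float32) / 32768.0
    if channels > 1:
        # Frames are interleaved; average the channels down to mono.
        samples = samples.reshape(-1, channels).mean(axis=1)
    return samples, rate


def resample_linear(samples: np.ndarray, src_rate: int, dst_rate: int = 16000) -> np.ndarray:
    """Crude resampling by linear interpolation (no anti-aliasing filter)."""
    if src_rate == dst_rate:
        return samples
    n_out = int(round(len(samples) * dst_rate / src_rate))
    t_in = np.arange(len(samples)) / src_rate  # input sample times (seconds)
    t_out = np.arange(n_out) / dst_rate        # output sample times (seconds)
    return np.interp(t_out, t_in, samples).astype(np.float32)
```

For example, samples, sr = read_wav("recording.wav") followed by resample_linear(samples, sr) gives a 16kHz array. One second of 44.1kHz audio becomes 16000 samples.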

Resemble AI

Corentin Jemine, who developed the above library as a Master’s project, has since teamed up with a company called Resemble AI, which is attempting to commercialise voice cloning.

They have a couple of options – a newer “Playground” single-shot model and a more stable production-ready “Project” interface. A “voice” is currently being built with 25 voice samples. I’ll report back later on how that goes. The newer single-shot model can be heard below:

Ah, me as an American!

To be fair there was a warning about this. The zero- and low-shot voice cloning models are all trained on extensive American voice samples. This means they are good at cloning male American voices but Americanise anyone who isn’t American!

TTS

On GitHub, Corentin Jemine recommends checking out the TTS library, so let’s do that. The company behind this library, Coqui, also has a commercial offering we’ll experiment with later.

To get started, install it with pip (try the same conda environment as above): pip install TTS.

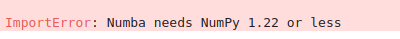

A little tweak – I needed to downgrade my numpy installation:

Downgrade to numpy 1.22.4 via: pip install numpy==1.22.4 – success!
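To confirm the downgrade took effect in the active environment, here is a small check using the standard library’s importlib.metadata. The helpers parse_release, installed_version and matches_pin are hypothetical. They compare only the numeric release part of a version, so suffixes such as rc1 or .dev0 are ignored:

```python
import re
from importlib.metadata import PackageNotFoundError, version
from typing import Optional


def parse_release(ver: str) -> tuple[int, ...]:
    """Turn '1.22.4' (or '1.22.4rc1') into (1, 22, 4), ignoring any suffix."""
    match = re.match(r"\d+(?:\.\d+)*", ver)
    if match is None:
        raise ValueError(f"unrecognised version string: {ver!r}")
    return tuple(int(part) for part in match.group(0).split("."))


def installed_version(package: str = "numpy") -> Optional[str]:
    """Return the installed version string, or None if the package is missing."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None


def matches_pin(package: str = "numpy", pin: str = "1.22.4") -> bool:
    """True if the installed release number equals the pinned one."""
    ver = installed_version(package)
    return ver is not None and parse_release(ver) == parse_release(pin)
```

Running print(installed_version("numpy"), matches_pin("numpy", "1.22.4")) in the conda environment should print 1.22.4 True after the downgrade.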

Let’s use the same recording.wav file as before. From the library README example:

from TTS.api import TTS

tts = TTS(model_name="tts_models/multilingual/multi-dataset/your_tts", progress_bar=True, gpu=True)

tts.tts_to_file("This is me as voice cloned. I sound a little American again.", speaker_wav="recording.wav", language="en", file_path="output.wav")

Here “I” am:

This uses the YourTTS model. As before, to achieve zero/single-shot performance it relies on parameters trained on American male voices, so the result sounds American.
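To compare several sentences against the same reference recording, the README call can be wrapped in a loop so the model is loaded only once. This is a minimal sketch. The function clone_lines and the clones/line_NN.wav naming are hypothetical. It assumes tts is the TTS object created above and passes tts_to_file exactly the keyword arguments used in the README example:

```python
from pathlib import Path


def clone_lines(tts, lines, speaker_wav="recording.wav", language="en", out_dir="clones"):
    """Synthesise each line in the cloned voice; return the output paths."""
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    paths = []
    for i, text in enumerate(lines):
        path = out / f"line_{i:02d}.wav"
        # Same call as the README example, one output file per line of text.
        tts.tts_to_file(text, speaker_wav=speaker_wav, language=language, file_path=str(path))
        paths.append(path)
    return paths
```

For example, clone_lines(tts, ["Hello there.", "This is a second test sentence."]) writes clones/line_00.wav and clones/line_01.wav. Reusing one loaded model avoids paying the model start-up cost for every sentence.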

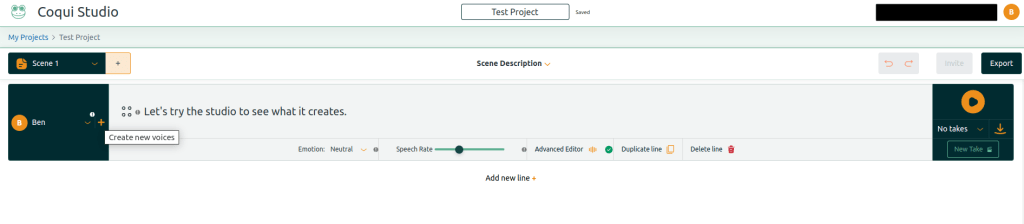

Coqui

Let’s also experiment with the Coqui Studio platform. This offers the ability to “Add a Voice”. You record a short snippet of audio:

You can then type a line and attempt to synthesise speech in that voice:

That’s not too bad. Still an American twang and a vocoder-like distortion but getting closer.

Results

Although fairly heavily distorted, the original command line cloning using the Real Time Voice Cloning Library was one of the best.

The more commercial offerings were tailored to one- or zero-shot implementations, so the result didn’t really work: it sounded too American, losing what I think is distinctive about my own voice.

Next Steps

Fine-tune an existing state-of-the-art model on voice samples I record.

This has a few issues. For one, I need lots of similar samples of my voice. I also don’t know which utterances provide the right kind of training data. Having experimented, I don’t think needing more than a single sample is the deal-breaker many think it is: I am quite happy recording 25 samples if it produces a better-quality match. Training, though, is a pain and an art. First, you need the data samples correctly arranged and labelled. Then you need the training environment to work and use the GPU. Then you need to train successfully.
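For the “arranged and labelled” step, one widely used layout is the LJSpeech style. It consists of a wavs/ folder plus a pipe-separated metadata.csv with one id|transcript|normalised transcript row per clip. As far as I know, the TTS library can read this layout through its ljspeech dataset formatter, but check its training docs before relying on that. This is a minimal sketch under that assumption. It also assumes the transcripts need no normalisation, so the same text goes in both columns. The helper write_ljspeech_metadata is hypothetical:

```python
from pathlib import Path


def write_ljspeech_metadata(dataset_dir, transcripts):
    """Write an LJSpeech-style metadata.csv for the clips in dataset_dir/wavs.

    transcripts maps a clip id (the .wav file name without extension) to the
    text spoken in that clip. Every row is validated before anything is
    written. Returns the number of rows written.
    """
    root = Path(dataset_dir)
    wav_dir = root / "wavs"
    rows = []
    for clip_id, text in sorted(transcripts.items()):
        if not (wav_dir / f"{clip_id}.wav").is_file():
            raise FileNotFoundError(f"no audio for {clip_id!r} in {wav_dir}")
        text = " ".join(text.split())  # collapse newlines and repeated spaces
        if "|" in text or "|" in clip_id:
            raise ValueError(f"'|' is the column separator; remove it from {clip_id!r}")
        # Columns: id | transcript | normalised transcript (assumed identical here).
        rows.append(f"{clip_id}|{text}|{text}\n")
    (root / "metadata.csv").write_text("".join(rows), encoding="utf-8")
    return len(rows)
```

With 25 recorded samples this produces a 25-row file. Validating every clip before writing means a missing recording is caught early rather than halfway through a training run.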

One option is Alexa. Amazon (possibly by law?) provides your data on request. This includes everything recorded by Alexa. This could be an instant library of voice samples to clone my family. Stay tuned.

Hello Ben, thanks for this amazing article. How much memory does the toolbox in https://github.com/CorentinJ/Real-Time-Voice-Cloning need to run?

Thanks for your help.