I was at an ambient night in Bath when the idea arrived. Dark room, low droning music, projector throwing patterns on the wall. And I thought: what if the shapes on that wall were alive? Not a visualiser in the Winamp sense — not bars bouncing or kaleidoscopes rotating — but actual creatures. Neon things that flock and drift and respond to whatever the musicians are doing.

I typed the idea into my Notion todo list on my phone, probably looking rude in the dark. Black background. Ambient neon creatures. React to the music. Stick on a projector somehow. Raspberry Pi with a microphone. Spotify on the speakers. Stars on the ceiling if I’m feeling ambitious.

Then I did something that still feels slightly sci-fi. I handed the whole thing to an AI agent and asked it to research the approaches and build it.

Twenty minutes

About twenty minutes later I had two working versions. A browser one I could open on my phone right there at the gig, and a Python version designed to run on a Raspberry Pi connected to a projector via HDMI. Both open source, sitting in a GitHub repo, ready to go.

This is ‘vibe-coding’ taken to a fairly extreme place. I’ve written about it before — the idea that you describe what you want and AI builds it. But there’s something different about doing it for something artistic rather than functional. I wasn’t describing a database schema or an API endpoint. I was describing a feeling. Neon jellyfish drifting through darkness, responding to sound. And somehow that worked.

What the creatures actually do

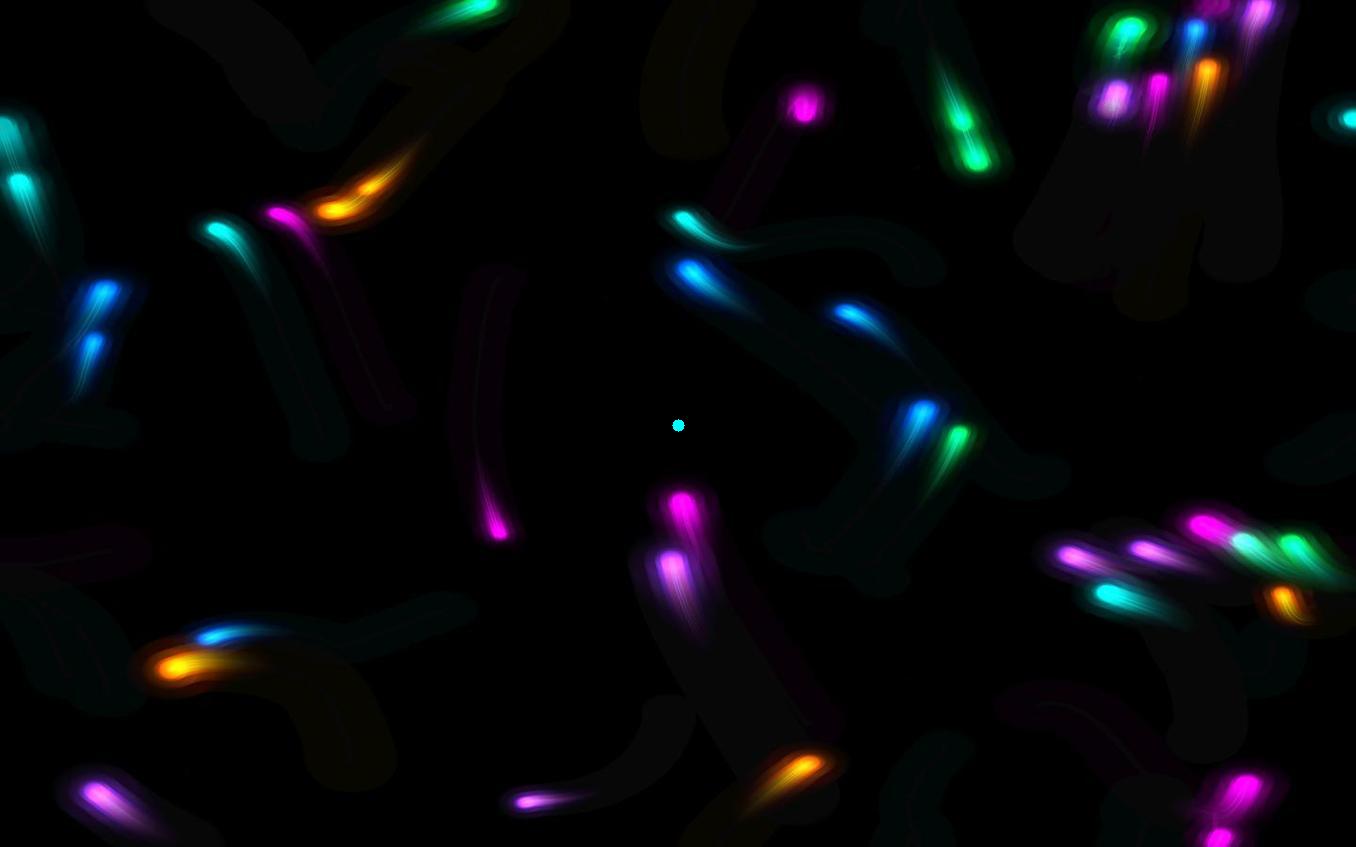

Forty or so neon plankton-like things flock across a black background. They use Craig Reynolds’ boids algorithm, which dates back to 1986. The idea is beautifully simple: each creature follows three rules. Separation: stay a bit apart from your neighbours. Alignment: match their general heading. Cohesion: steer towards the centre of the group. That’s it. Three rules, and you get flocking behaviour that looks genuinely organic. It’s one of those examples where complex behaviour emerges from simple parts, something that keeps showing up in nature and in code.
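
For the curious, here is a minimal sketch of those three rules in plain Python. It is not the code from the repo: the `Boid` class, the `step` and `make_flock` functions, and every weight and radius are assumptions picked for illustration, and there is no drawing or audio input.

```python
import math
import random
from dataclasses import dataclass


@dataclass
class Boid:
    x: float
    y: float
    vx: float
    vy: float


def step(boids, radius=50.0, min_dist=15.0,
         sep_w=0.05, align_w=0.05, coh_w=0.005, max_speed=3.0):
    """Advance every boid by one frame using the three flocking rules.

    All weights and distances are illustrative guesses, not tuned values.
    """
    updates = []
    for b in boids:
        neighbours = [o for o in boids
                      if o is not b
                      and math.hypot(o.x - b.x, o.y - b.y) < radius]
        ax = ay = 0.0
        if neighbours:
            n = len(neighbours)
            # Rule 1, separation: push away from anyone closer than min_dist.
            for o in neighbours:
                if math.hypot(o.x - b.x, o.y - b.y) < min_dist:
                    ax += (b.x - o.x) * sep_w
                    ay += (b.y - o.y) * sep_w
            # Rule 2, alignment: nudge velocity towards the neighbours' average.
            ax += (sum(o.vx for o in neighbours) / n - b.vx) * align_w
            ay += (sum(o.vy for o in neighbours) / n - b.vy) * align_w
            # Rule 3, cohesion: steer towards the neighbours' centre.
            ax += (sum(o.x for o in neighbours) / n - b.x) * coh_w
            ay += (sum(o.y for o in neighbours) / n - b.y) * coh_w
        vx, vy = b.vx + ax, b.vy + ay
        speed = math.hypot(vx, vy)
        if speed > max_speed:
            # Cap the speed so the flock never explodes.
            vx, vy = vx / speed * max_speed, vy / speed * max_speed
        updates.append((vx, vy))
    # Apply all updates together so every boid reacts to the same snapshot.
    for b, (vx, vy) in zip(boids, updates):
        b.vx, b.vy = vx, vy
        b.x += vx
        b.y += vy


def make_flock(count=40, width=800, height=600, seed=1):
    """Scatter `count` boids at random positions with small random velocities."""
    rng = random.Random(seed)
    return [Boid(rng.uniform(0, width), rng.uniform(0, height),
                 rng.uniform(-1, 1), rng.uniform(-1, 1))
            for _ in range(count)]
```

Calling `step(flock)` once per frame on the result of `make_flock()` is enough to get clumping, drifting groups. Each boid only looks at neighbours within `radius`, which is why the flock splits and merges rather than moving as one blob.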

The audio-reactive bit uses an FFT (Fast Fourier Transform), which breaks a short slice of sound into its component frequencies so they can be grouped into bands. The sub-bass makes the creatures swell. Bass increases their speed. Mids make their trailing tentacles wiggle. Treble shifts the colour brightness. Overall volume controls how persistent the motion trails are. So in a quiet passage the creatures drift slowly, trailing long ghostly afterimages. When the music builds, they speed up, pulse, and the trails tighten.
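
Here is a rough Python sketch of that pipeline: one frame of samples goes through an FFT, the spectrum is grouped into four bands, and the band levels are mapped onto creature parameters. It is an illustration, not the project's code. The band edges in `BANDS`, the function names, and every scaling constant in `creature_params` are assumptions. The small recursive `fft` is there only to keep the example dependency-free; a real build would more likely lean on a library FFT.

```python
import cmath
import math

# Hypothetical band edges in Hz; the project's real cut-offs may differ.
BANDS = {
    "sub_bass": (20, 60),
    "bass": (60, 250),
    "mids": (250, 4000),
    "treble": (4000, 20000),
}


def fft(samples):
    """Recursive radix-2 FFT; the input length must be a power of two."""
    n = len(samples)
    if n & (n - 1) or n == 0:
        raise ValueError("length must be a power of two")
    if n == 1:
        return [complex(samples[0])]
    even = fft(samples[0::2])
    odd = fft(samples[1::2])
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


def band_levels(samples, sample_rate):
    """Return the peak magnitude in each band, where 1.0 is a full-scale sine."""
    n = len(samples)
    spectrum = fft(samples)
    levels = {}
    for name, (low, high) in BANDS.items():
        mags = [abs(spectrum[k]) / (n / 2)
                for k in range(1, n // 2)
                if low <= k * sample_rate / n < high]
        levels[name] = max(mags) if mags else 0.0
    return levels


def rms(samples):
    """Overall volume of the frame as root-mean-square amplitude."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def creature_params(levels, volume):
    """Map band levels to creature behaviour; all constants are guesses."""
    return {
        "size": 1.0 + levels["sub_bass"],           # sub-bass swells the body
        "speed": 1.0 + 2.0 * levels["bass"],        # bass speeds things up
        "tentacle_wiggle": levels["mids"],          # mids wiggle the tentacles
        "brightness": 0.5 + 0.5 * min(1.0, levels["treble"]),
        # Louder music fades the trails faster, so they tighten.
        "trail_fade": 0.02 + 0.2 * min(1.0, volume),
    }
```

In use, each new buffer from the microphone would go through `band_levels` and `rms`, and the resulting dictionary would be read by the drawing code on the next frame. Smoothing the values between frames is left out here, though anything on a projector would probably want it.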

The colours are neon — cyan, magenta, electric blue, lime, purple, orange — and where the creatures overlap, additive blending creates a glow. It looks like bioluminescent plankton in a dark ocean. Which is, I suppose, exactly what I was imagining in that dark room at the ambient night.

The two versions

The browser version is a single HTML file using p5.js, a creative coding framework. You open it on any phone or laptop, tap to start, grant microphone permission, and it just works. This is the one I tried at the actual ambient night, about twenty minutes after having the idea. Full circle. Possibly the fastest I’ve ever gone from ‘I wonder if…’ to ‘oh, it actually works’.

The Pi version uses pygame and pyaudio in Python, designed to run fullscreen via HDMI to a projector. USB microphone picks up whatever’s playing in the room. It falls back to a demo mode if there’s no mic plugged in, and you can set it up with systemd to auto-start on boot, so the Pi becomes a dedicated ambient creature box. Plug it in, point the projector at a wall, put some music on.

Three more modes

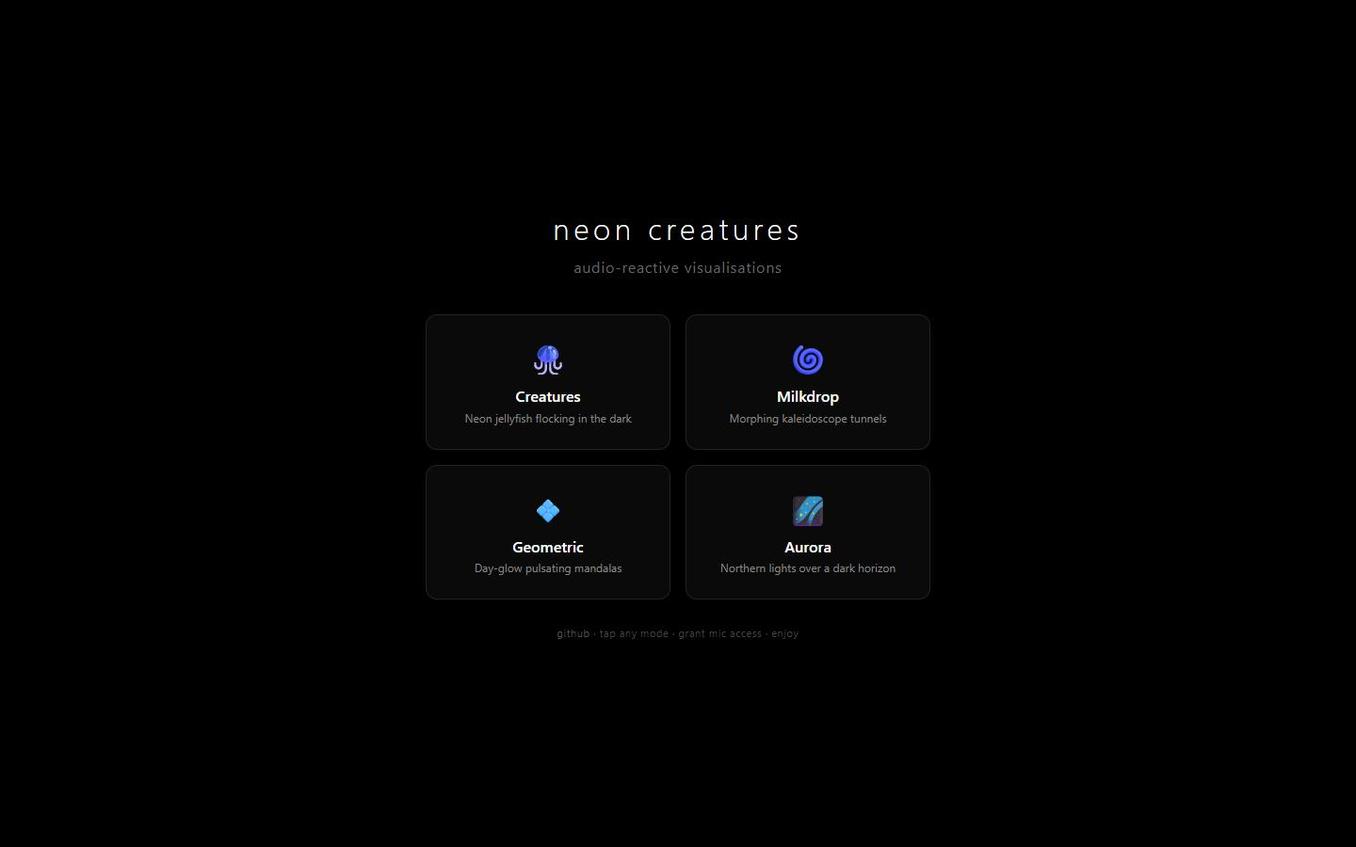

The creatures were the starting point, but once you have the architecture — p5.js, microphone FFT, black canvas, additive blending — it becomes a platform for different kinds of audio-reactive art. So I added three more modes. You can switch between them from a landing page that presents all four as cards on a dark background. Tap any mode, grant mic access, enjoy.

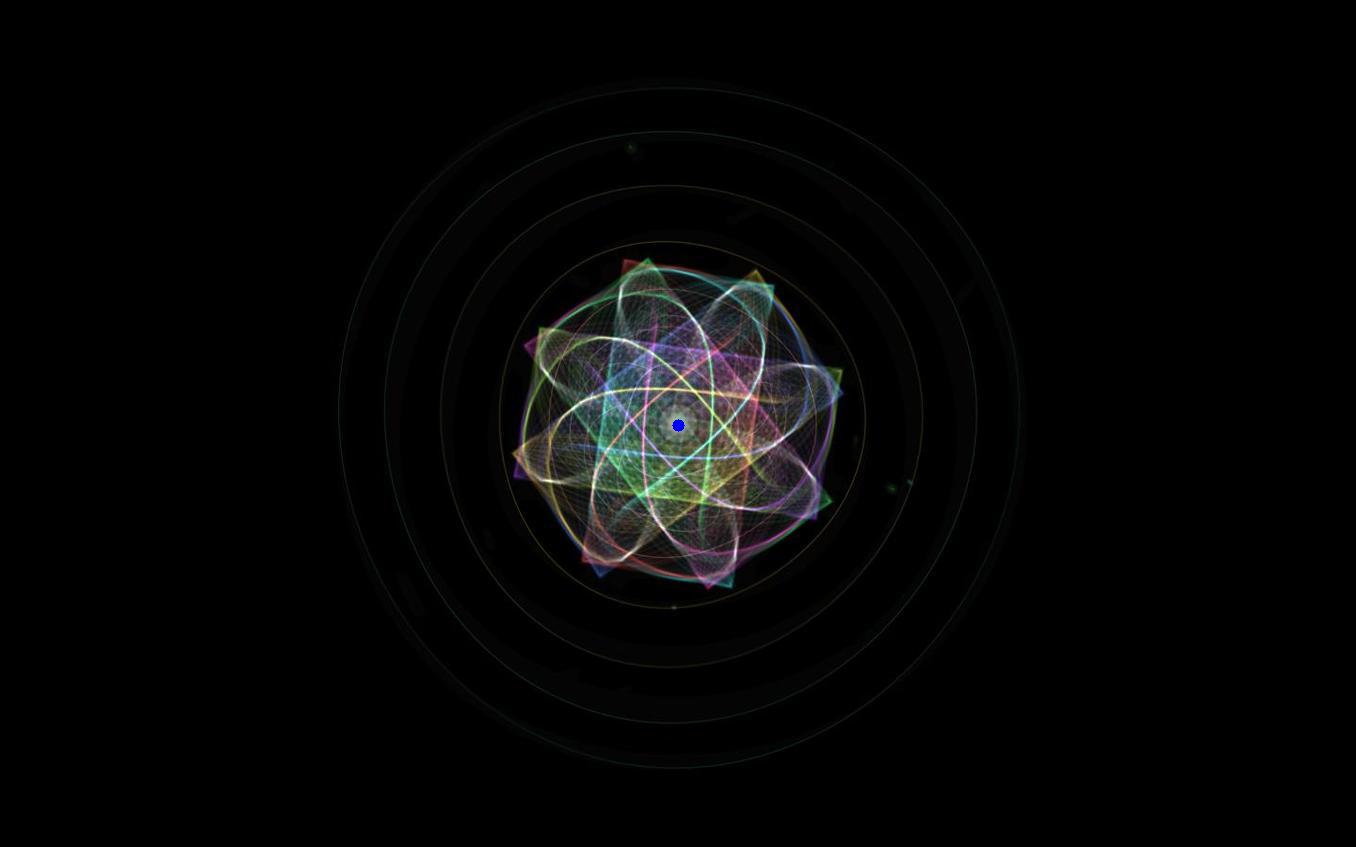

Milkdrop

This one is a nod to the classic Winamp Milkdrop visualiser that defined music-on-a-computer in the early 2000s. Morphing kaleidoscope tunnels with 8-fold symmetry, built from Lissajous curves that slowly evolve their parameters over time. Concentric tunnel rings pulse outward from the centre. A waveform oscilloscope traces around the middle. Spectrum analysis drives colour bands radiating from the core, and particles drift in from the edges when the treble spikes. The whole thing rotates and morphs — treble drives the hue shifting, bass controls the tunnel depth, and the Lissajous parameters quietly evolve so it never repeats the same pattern. It’s the most psychedelic of the four modes — closest to the old Winamp spirit but with modern smoothing and additive glow.
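
The core geometry is simple enough to sketch in a few lines of Python. A Lissajous curve is just `x = sin(a·t + δ)`, `y = sin(b·t)`, and 8-fold symmetry can be had by drawing the same curve eight times, each rotated by another 45 degrees. The version below is an assumption about how such a mode might be put together, not the actual implementation: the function names and the drift rates in `frame` are made up, and a true kaleidoscope might mirror as well as rotate.

```python
import math


def lissajous_points(a, b, delta, count=200, radius=1.0):
    """Sample one Lissajous curve: x = sin(a*t + delta), y = sin(b*t)."""
    points = []
    for i in range(count):
        t = 2 * math.pi * i / count
        points.append((radius * math.sin(a * t + delta),
                       radius * math.sin(b * t)))
    return points


def with_rotational_symmetry(points, folds=8):
    """Return `folds` copies of the curve, each rotated by a further 360/folds degrees."""
    copies = []
    for k in range(folds):
        angle = 2 * math.pi * k / folds
        c, s = math.cos(angle), math.sin(angle)
        copies.append([(x * c - y * s, x * s + y * c) for x, y in points])
    return copies


def frame(time_s):
    """Curves for one frame; a, b and delta drift slowly so the pattern keeps changing.

    The drift rates are arbitrary illustrative values.
    """
    a = 3.0 + math.sin(time_s * 0.05)
    b = 2.0 + math.cos(time_s * 0.03)
    delta = time_s * 0.1
    return with_rotational_symmetry(lissajous_points(a, b, delta))
```

Each call to `frame` returns eight lists of points in the range -1 to 1, ready to be scaled to the screen and drawn as connected lines. Audio could then be layered on top, for example by letting the bass level set `radius`.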

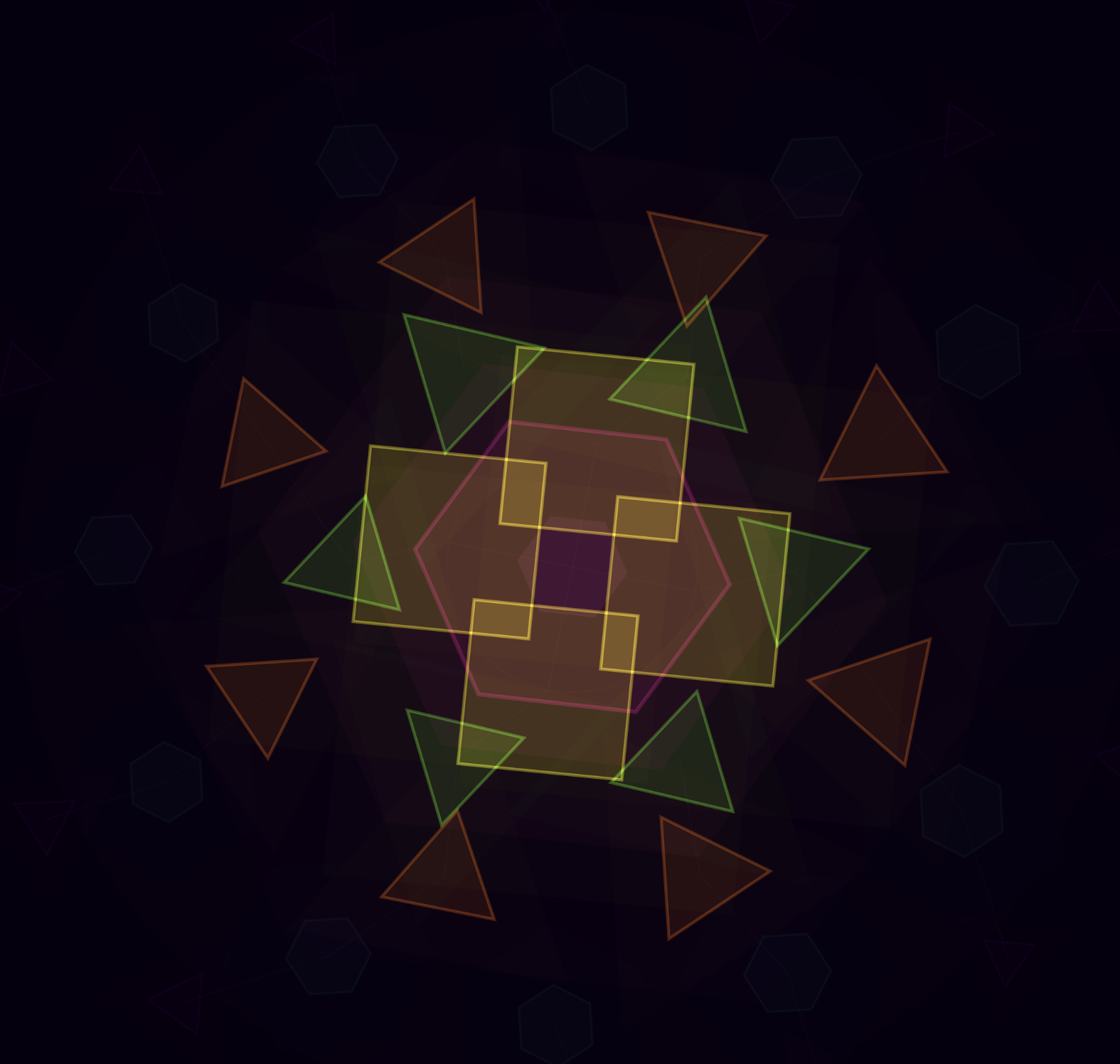

Geometric

Day-glow pulsating mandalas. Concentric rings of geometric shapes — triangles, squares, pentagons, hexagons — each ring rotating at a different speed, all arranged in radial symmetry around a glowing centre. The palette is fluorescent: hot pink, electric yellow, neon green, fluorescent orange, vivid cyan, acid purple. Bass pulses the central hexagon and drives the overall scale. Mids control how fast the rings rotate. Treble shifts the brightness. And when there’s a beat — a sharp jump in amplitude — the mandala spawns a burst of bloom particles that expand outward like a shockwave, fading as they go. There’s something deeply satisfying about watching geometric shapes breathe with music. It sits somewhere between sacred geometry and a rave.
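
The beat trigger can be sketched very simply in Python: keep a short running history of amplitude and call it a beat when the newest value jumps well above the recent average. This is one common way to do it, not necessarily what the project does. `BeatDetector`, `spawn_burst`, `update_particles`, and all the thresholds and speeds are hypothetical.

```python
import math
from collections import deque


class BeatDetector:
    """Flags a beat when amplitude jumps well above its recent average."""

    def __init__(self, history=30, threshold=1.5, floor=0.02):
        self.recent = deque(maxlen=history)  # last `history` amplitudes
        self.threshold = threshold           # how big a jump counts as a beat
        self.floor = floor                   # ignore jumps in near-silence

    def update(self, amplitude):
        average = sum(self.recent) / len(self.recent) if self.recent else 0.0
        is_beat = (bool(self.recent)
                   and amplitude > self.floor
                   and amplitude > average * self.threshold)
        self.recent.append(amplitude)
        return is_beat


def spawn_burst(count=24, speed=4.0):
    """Create a ring of particles flying outward from the centre."""
    burst = []
    for i in range(count):
        angle = 2 * math.pi * i / count
        burst.append({"x": 0.0, "y": 0.0,
                      "vx": speed * math.cos(angle),
                      "vy": speed * math.sin(angle),
                      "alpha": 1.0})
    return burst


def update_particles(particles, fade=0.04):
    """Move each particle outward, fade it, and drop the invisible ones."""
    alive = []
    for p in particles:
        p["x"] += p["vx"]
        p["y"] += p["vy"]
        p["alpha"] -= fade
        if p["alpha"] > 0:
            alive.append(p)
    return alive
```

Per frame, the loop would be something like: `if detector.update(volume): particles += spawn_burst()`, followed by `particles = update_particles(particles)`. Comparing against a moving average rather than a fixed level means the same code works in a quiet room and at a loud gig.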

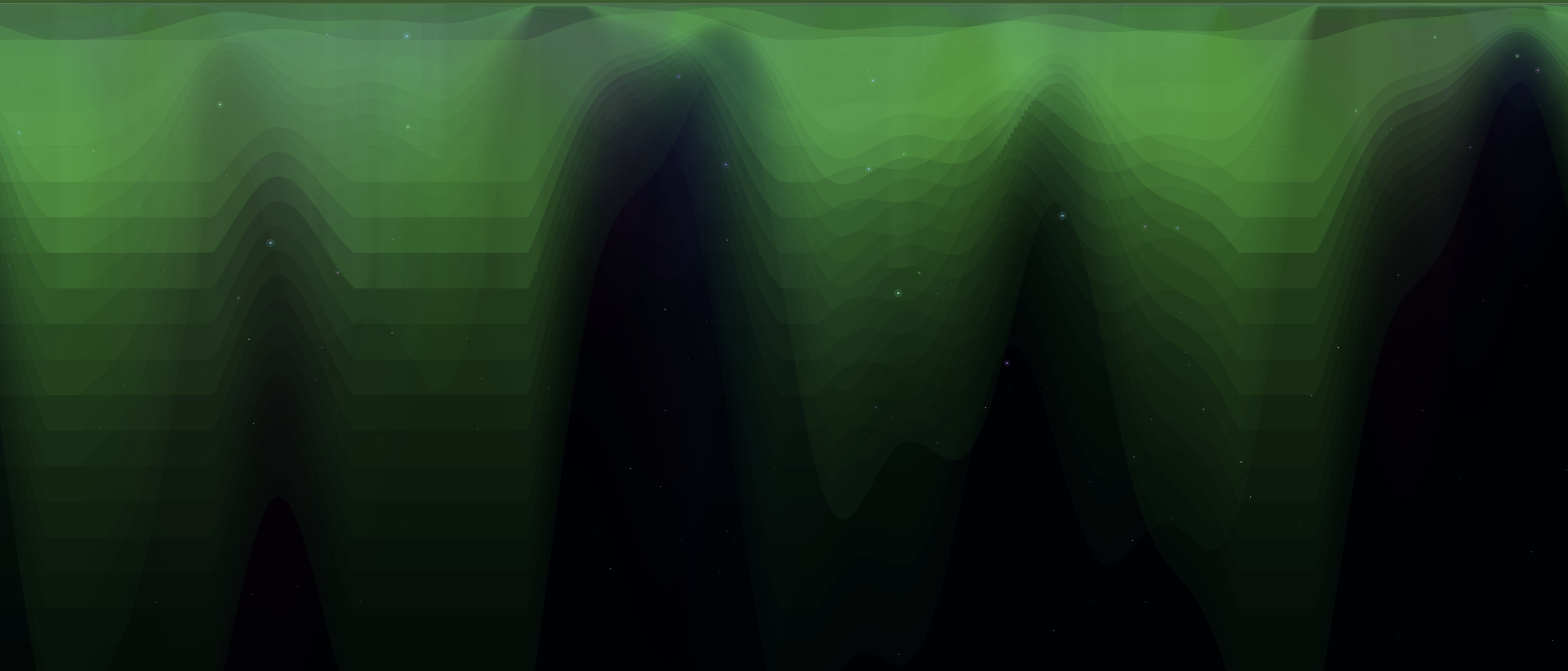

Aurora

Northern lights over a dark horizon. This is the most atmospheric of the four — a deep space starfield with layered curtains of colour sweeping across the sky. Five aurora layers, each driven by different frequency bands: the main green curtains respond to bass, blue and purple curtains follow the treble, and a faint pink curtain at the back reacts to overall amplitude. The curtains are built from Perlin noise, giving them that organic rippling quality. Below sits a procedural terrain of dark rolling hills. Stars twinkle overhead, their brightness modulated by treble. In a quiet room with ambient music playing, this one is genuinely calming. It looks like standing somewhere in northern Scandinavia watching the sky move.

The strange bit

What I find interesting is the tension in using AI to create something that’s meant to feel organic and alive. The boids algorithm is itself a kind of artificial life. Simple rules producing emergent complexity that looks natural. And here I am using an AI to write the code that simulates that artificial life. There’s a nesting-doll quality to it. Artificial intelligence writing artificial life, reacting to music made by humans in a room.

I’m honestly not sure how I feel about the creative authorship question here. I had the idea. I described it. The AI researched the approaches, chose the tools (p5.js for the browser, pygame for the Pi), implemented the flocking, the FFT analysis, the colour mapping, the spring physics on the tentacles. I looked at the result and said ‘yes, that’s the thing I was imagining’. Is that ‘vibe-coding’ or is it something more like directing? Or commissioning?

Maybe the distinction doesn’t matter much. The creatures exist. They react to music in a dark room. Watching them is genuinely meditative — something about flocking behaviour holds your attention in the way that watching starlings or fish does. Your brain wants to find the pattern. There’s a pattern there, sort of, but it keeps shifting.

Try it

The whole project is on GitHub. The browser version — all four modes — is live here. The Pi version needs a bit of setup but nothing too fiddly. If you’ve got a projector and a dark room and some ambient music, I’d be curious to know how it feels in your space.

I keep thinking there’s more to do with this. Different creature types. Different mappings between frequency and behaviour. Maybe creatures that evolve over a long listening session, adapting to the kind of music being played. I don’t know. It started as a scribbled note on a phone at a gig, and now it’s four different audio-reactive worlds on a wall. Where it goes next probably depends on the next idea that arrives in a dark room somewhere.

(Published with help from Perplexity Computer so that I actually publish! You can tell from the em-dashes, but it’s pretty good, has been “Benified”, and is better than no post, or not doing it at all!)