An excellent Bath Digital Festival for 2025. In true BDF mode, I got AI to help me write up the festival. It still took an hour, and is my well-being any better?

Cognitive Diversity: Exploring Life with Aphantasia

Here I explore aphantasia, an inability to generate mental images across senses. I reflect on its impact on creativity, memory, emotional connection, and career paths, emphasizing cognitive diversity.

Alternative Lord’s Prayer

An alternative version of the Lord’s Prayer that feels more true to me.

Maximizing 750 Words for Journalling and Creativity

The post discusses my experience with 750words for daily writing, detailing its applications in journalling, creative writing, and projects, while exploring the integration of LLMs to enhance feedback and insights.

First Impressions: Civilization 7’s Evolution and Growing Pains

Civilization 7 offers intriguing innovations in diplomacy, military mechanics, and visuals, but faces challenges with interface clutter, unclear mechanics, and balance between strategic depth and accessibility that need addressing.

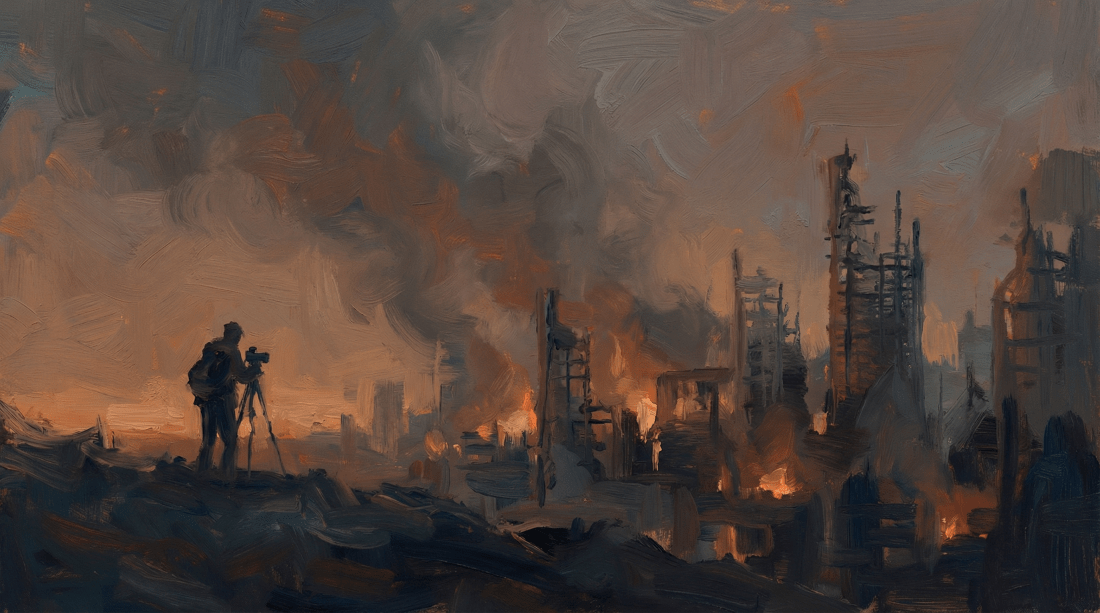

Civil War: Not the Film You Think It Is

Civil War isn't the political thriller you expect. It's about witnessing, voyeurism, and the cost of documenting violence.

TherapyBot: Exploring Knowledge Graphs and LLMs in Chat-Based Applications

Introduction: In my spare time I'm trying to make some progress on a coding project called TherapyBot. Despite its name, it's not strictly about therapy - that's just one potential use case. The main aim? To test out some ideas and, let's be honest, to level up my coding skills. What's TherapyBot, anyway? At its …

Poet

The author expresses a deep yearning to be a poet, reflecting on the creative process and societal expectations. They grapple with the practicalities of a poetic career, the pressures of performance, and the challenges of convention versus freedom. Ultimately, the desire stems from a love for words and self-expression, amid uncertainties.

Antecedence Matters

Antecedence matters. In the Bible, John 10:22-42 describes Jesus being stoned for blasphemy. Modern translations have Jesus calling himself "the son of God". But it can equally be "a" "son of God". When he is being stoned for blasphemy for calling himself "son of God", modern translations say: 34 Jesus replied, “It is written in …

A (Working) CI/CD Workflow with GitHub Actions for AI Applications

The post shares the author's journey of setting up a cost-effective CI/CD pipeline for AI applications using GitHub Actions and Docker. Emphasizing branching, versioning, and meticulous testing, it highlights the importance of simplicity, efficiency, and automation in achieving a streamlined development workflow.