Some notes from my project to create self-coding software. The post emphasises the power of testing, "good code" practices, pain points, and cool features we can leverage. These include GitHub/IDE code review GUIs, tools like Ruff and Sphinx, Test Driven Development, modularity, code duplication, and profiling.

Self-Coding Repository – Idea Update

The author is developing a self-coding project that uses Large Language Models (LLMs), such as GPT-3.5/4-turbo and GPT-4, to generate code, tests, and documentation. Existing project files are used as LLM prompt context. Evaluation tools from the Python ecosystem, the GitHub API, and function calling options are leveraged to streamline the process. The system is designed to cater for a variety of programming tasks. However, tuning the different agent "personalities" and managing complex project directories effectively remain challenges.
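As a rough illustration of using existing project files as LLM prompt context, here is a minimal sketch. The helper name `build_prompt_context`, the file suffixes, the `### File:` header format, and the character budget are all assumptions made for this example, not details taken from the project itself.

```python
from pathlib import Path


def build_prompt_context(project_dir, suffixes=(".py", ".md"), max_chars=4000):
    """Concatenate project files into one prompt-context string.

    Hypothetical helper: the header format and the rough character
    budget are illustrative assumptions, not the project's own design.
    """
    root = Path(project_dir)
    sections = []
    used = 0
    # Walk the project in a stable order so the prompt is reproducible.
    for path in sorted(root.rglob("*")):
        if not path.is_file() or path.suffix not in suffixes:
            continue
        text = path.read_text(encoding="utf-8", errors="replace")
        header = f"### File: {path.relative_to(root).as_posix()}\n"
        section = header + text.rstrip() + "\n"
        # Stop once the next file would exceed the rough budget.
        if used + len(section) > max_chars:
            break
        sections.append(section)
        used += len(section)
    return "\n".join(sections)
```

The returned string could then be prepended to a task description before it is sent to whichever model is in use. A character budget is only a crude stand-in for a token limit, so a real setup would likely count tokens instead.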

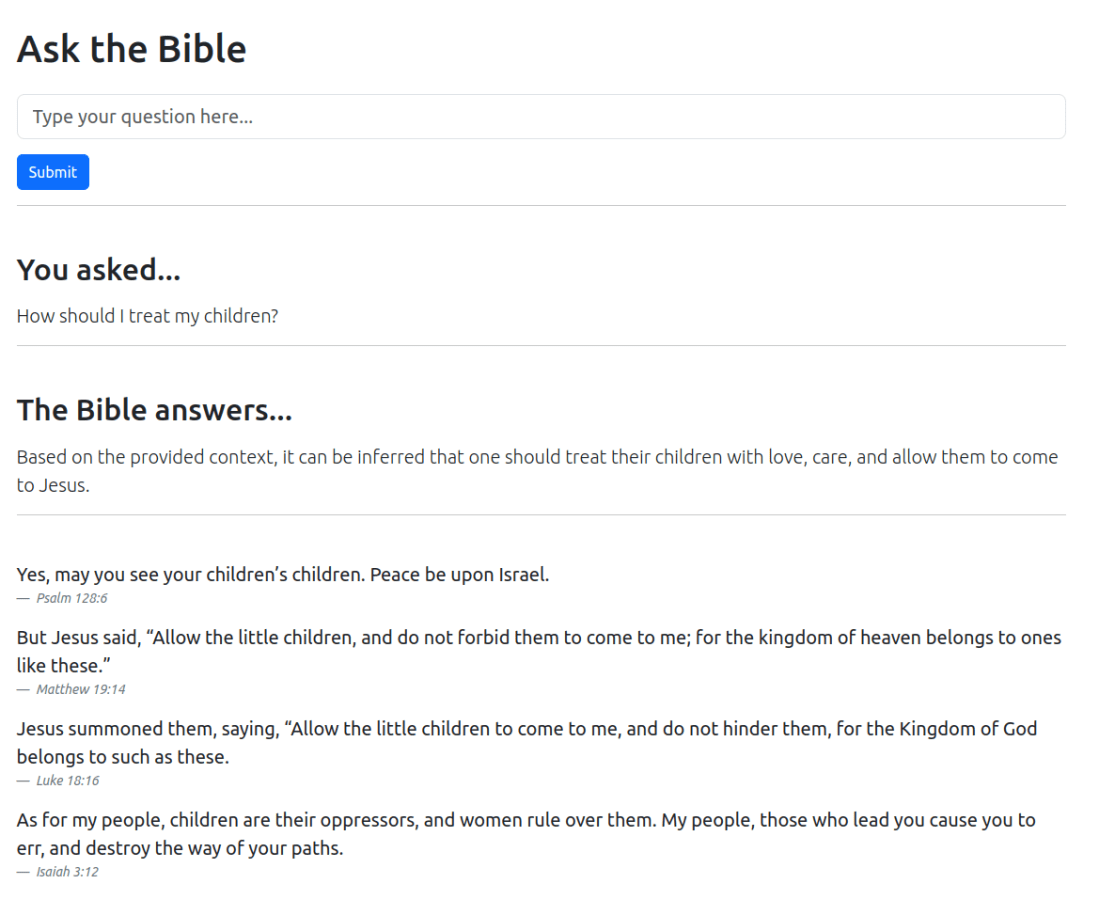

Building a Bible & Quran QA System with Langchain and Flask

If I had lived in the 14th century, I would have liked to be a monk. This post channels my inner monk to explain how you can build a proof-of-concept exegesis system on the Bible and Quran with LangChain.

Cool Personal Datasets for Amateur Data Analysis

I’m an amateur data nerd. The problem is that it’s hard to find good datasets that are personally relevant. However, it’s super easy to download some juicy data archives for data crunching at home. Here is a short guide to doing this.

Analysing Your Tweets with ChatGPT

This post dives into applying ChatGPT's new Code Interpreter / Advanced Data Analysis to downloaded Twitter data. Keep reading for an insight into the cool things you can do.

Coding Agent: Automating Software Development with LLM

[Spoiler - I got ChatGPT to write this blog post based on an auto-generated Readme file summary of an auto-generated code repository. This is what the future of coding will look like. More nested generations than a Christopher Nolan movie.] Software development is a complex and time-consuming process. With the advent of Artificial Intelligence, automating …

Speeding Up API Development

Most of my projects these days involve building a web API. Building a web API using REST principles allows you to bolt on multiple different front-ends (e.g., React app or iOS/Android app) and easily access functions from other computers. But building the infrastructure of the API often takes time, time I'd really like to spend …

Organising SQLAlchemy Base and Models in FastAPI Projects

When working with FastAPI and SQLAlchemy, you may encounter an issue where your tables are not created in the database even though your code seems to be correctly set up. A common reason for this is having multiple instances of declarative_base instead of a single shared instance. In this blog post, we'll walk you …

Adventures in Voice Cloning

Smart speakers are everywhere. But could we design a smart speaker to talk to us with our own voice, or that of a person we know?

Frequently Asked Questions

Or: me versus ChatGPT. Or: “I am not an LLM”